Light Field Video Sequence by Nagoya University

Three test sequences (NagoyaFujita, NagoyaOrigami, NagoyaDataLeading) are provided by Fujii Laboratory, Department of Information and Communication Engineering, Graduate School of Engineering, Nagoya University.

Terms of Use: ANY kind of publication or report using these sequences MUST refer to the following TWO references.

[1] Mehrdad Teratani, Shu Fujita, Kazuyoshi Suzuki, Toshiaki Fujii, “[MPEG-I Visual] Nagoya University Three New Test Sequences Captured by Light Field Video Camera”, ISO/IEC JTC1/SC29/WG11, M47642, Geneva, Switzerland, March 2019.

[2] http://www.fujii.nuee.nagoya-u.ac.jp/multiview-data/

Copyright: ONLY Available for Academic Usage

Production: Nagoya University

Capturing Condition:

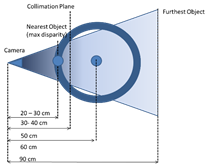

We placed objects close to the camera, as shown in Fig. 1 (right), so that the disparity among views is distinguishable. The details of the capturing condition are shown in Fig. 1 (left). The moving blue doll is at the collimation plane, which should have a disparity of 0 (about 40 cm from the camera). The nearest object is placed about 40 cm from the camera.

Fig. 1. Capturing (left) scene, and (right) condition.

Specification of the Camera and the Main Lens:

Here, we introduce the specifications of the focused plenoptic camera and the main lens used for capturing. Fig. 2 shows the camera and the lens. Tables 1 and 2 show the specifications of the Raytrix [2, 3] camera and the main lens, LMVZ166HC (by Kowa) [7], respectively. The main lens was set to a focal length of 16 mm and an F-stop of F2.8.

Fig. 2. Camera (Raytrix R5-C-GigE-F2.4 (color)) and lens (LMVZ166HC (by Kowa)) used for capturing.

Table 1. Raytrix specification.

| Item | Specification |
| --- | --- |
| Product code (model number) | R5-C-GigE-F2.4 |
| Resolution | 2048 (H) x 2048 (V), 4 million pixels |
| Image sensor | Progressive scan CMOS, 1 inch, CMOSIS CMV 4000 |
| Shutter | Global shutter |
| Pixel size | 5.5 μm × 5.5 μm |
| Frame rate (raw) | 25 fps @ Dual GigE |
| Resolution after reconstruction | Approximately 1 million pixels |
| Rx microlens | F2.4 |
| Number of pixels @ microlens diameter | 23 pixels / 1 microlens |
| Number of depth layers | Approximately 90 layers |
| Depth of field | Approximately 6 times the usual lens |
| Lens mount | C mount |
| Output interface | Dual GigE |
| Viewer software | RxLive software (refocus, all focus, 3D, multi view, stereo display) |
| SDK | 4D Light Field SDK (separately) |
| Usage environment | MS-Windows 7, CUDA, OpenGL 3.0, Compute Capability |
| Onboard GPU | Nvidia CUDA GPU GeForce GTX-980 or higher recommended |
| Power supply | Power supply from external power supply |

Table 2. Main lens specification

| Item | Specification |
| --- | --- |
| Focal Length | 16 - 64mm (4x) |
| Focal Length Sort Order | 016 |
| Lens Type | Varifocal |
| Image Size | 1" (12.8 x 9.6 x 16mm) |
| Iris Range (F-Stop) | F1.8 – 16 |
| Focusing Range | 1.0m |
| Filter Thread Size | M58x0.75 |
| Mount | C-mount |
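As a rough cross-check of the two tables, the sensor's active width follows from the pixel count and pixel size in Table 1, and a thin-lens approximation gives the horizontal field of view at the 16 mm focal length setting. This is only a sketch under stated assumptions: the thin-lens formula ignores the microlens array and lens distortion, the microlens count assumes microlenses placed edge to edge along one row, and all function names are illustrative rather than part of any SDK.

```python
import math

# Values taken from Table 1 and from the main lens setting stated above.
PIXELS_H = 2048            # horizontal resolution in pixels
PIXEL_PITCH_UM = 5.5       # pixel size in micrometres
FOCAL_LENGTH_MM = 16.0     # main lens focal length used for capturing
PIXELS_PER_MICROLENS = 23  # pixels across one microlens diameter


def sensor_width_mm(pixels: int, pitch_um: float) -> float:
    """Active sensor width: pixel count times pixel pitch."""
    return pixels * pitch_um / 1000.0


def horizontal_fov_deg(width_mm: float, focal_mm: float) -> float:
    """Thin-lens field of view (assumption: no microlens array, no distortion)."""
    return math.degrees(2.0 * math.atan(width_mm / (2.0 * focal_mm)))


width = sensor_width_mm(PIXELS_H, PIXEL_PITCH_UM)
fov = horizontal_fov_deg(width, FOCAL_LENGTH_MM)
# Assumption: microlenses sit edge to edge along a row of the sensor.
microlenses_across = PIXELS_H / PIXELS_PER_MICROLENS

print(f"sensor width: {width:.3f} mm")
print(f"approx. horizontal field of view: {fov:.1f} deg")
print(f"approx. microlenses per row: {microlenses_across:.0f}")
```

The sensor width of about 11.3 mm is consistent with the 1-inch image format listed for both the sensor and the lens.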

Test Sequence:

Figures 3 and 4 below show the captured images (color) and the center view of the multiview images generated by RLC (Reference Lenslet Convertor [8]). Note that the data was captured at 25 fps, while the arranged data is set to 30 fps.
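The effect of the frame-rate difference can be worked out with simple arithmetic. The sketch below assumes that the arranged data keeps every captured frame and only changes the nominal frame rate (no frame interpolation or dropping); this is an assumption, not something the description confirms. It uses the 368 to 400 frame range given for the raw video data.

```python
CAPTURE_FPS = 25   # frame rate of the camera (Table 1)
ARRANGED_FPS = 30  # frame rate set for the arranged data


def duration_s(n_frames: int, fps: float) -> float:
    """Duration in seconds of n_frames shown at a constant frame rate."""
    return n_frames / fps


# Assumption: every captured frame is kept, only the nominal rate changes.
speedup = ARRANGED_FPS / CAPTURE_FPS

for n in (368, 400):
    captured = duration_s(n, CAPTURE_FPS)
    arranged = duration_s(n, ARRANGED_FPS)
    print(f"{n} frames: {captured:.2f} s captured, {arranged:.2f} s at 30 fps")

print(f"motion appears {speedup:.1f}x faster when played at 30 fps")
```

Under that assumption, motion in the arranged sequences plays back 1.2 times faster than it was recorded.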

The parameters of the captured data are as follows:

Raw video data:

- Resolution: 2048x2048 pixels
- Color: 24-bit PNG, and YUV420
- Frame rate: 30 fps
- Number of frames: 368 - 400

Camera parameters given by the SDK output:

- Distance from the center of the image to the center of the central microlens
- Diameter and rotation angle of the microlens and the lens array, respectively
- Depth information for the three types of microlenses
- Distance between different types of microlenses
- Thickness of the microlens boundary line
- Lens distortions
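For readers working with the YUV420 version, the per-frame size and plane layout follow from the resolution above. The sketch below assumes 8-bit planar YUV 4:2:0 with the Y plane followed by U and then V, and no file header; the description does not state the bit depth or plane order, so verify both against the actual files. The function names and the file path argument are hypothetical.

```python
WIDTH = 2048
HEIGHT = 2048


def yuv420_frame_bytes(width: int, height: int, bytes_per_sample: int = 1) -> int:
    """Bytes per frame: one full-size luma plane plus two quarter-size chroma planes."""
    luma = width * height
    chroma = (width // 2) * (height // 2)
    return (luma + 2 * chroma) * bytes_per_sample


def split_yuv420_frame(buf: bytes, width: int, height: int):
    """Split one 8-bit planar frame into Y, U, V views (assumed order: Y, U, V)."""
    n_y = width * height
    n_c = (width // 2) * (height // 2)
    if len(buf) != n_y + 2 * n_c:
        raise ValueError("buffer size does not match one 8-bit YUV420 frame")
    view = memoryview(buf)
    return view[:n_y], view[n_y:n_y + n_c], view[n_y + n_c:]


def read_frame(path: str, index: int, width: int = WIDTH, height: int = HEIGHT):
    """Read frame `index` from a headerless YUV420 file (path is hypothetical)."""
    size = yuv420_frame_bytes(width, height)
    with open(path, "rb") as f:
        f.seek(index * size)
        buf = f.read(size)
    return split_yuv420_frame(buf, width, height)


print(yuv420_frame_bytes(WIDTH, HEIGHT), "bytes per 2048x2048 frame")
```

Dividing a file's size by the per-frame byte count is a quick way to check the assumed layout against the stated 368 to 400 frames.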

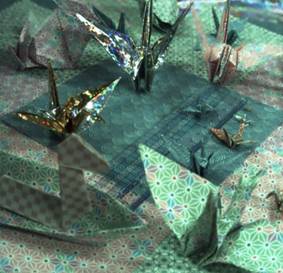

Fig. 3. Color images captured by Raytrix R5-C-GigE-F2.4 (left to right: NagoyaFujita, NagoyaOrigami, NagoyaDataLeading test sequences).

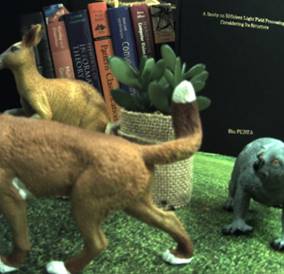

Fig. 4. Multiview images generated from the lenslet images by the lenslet-to-multiview video conversion tool [8].

Download Test Data:

Raytrix SDK output, xml: Download Camera Parameter

References

[1] Mehrdad Panahpour Tehrani, Sho Mikawa, Yuto Kobayashi, Shu Fujita, Keita Takahashi, Toshiaki Fujii, “[MPEG-I Visual] Development of a 3D Imaging System Using Light Field Camera and Tensor Display”, ISO/IEC JTC1/SC29/WG11 MPEG2017/M41244, Torino, Italy, July 2017.

[3] Christian Perwaß, Lennart Wietzke, “Single Lens 3D-Camera with Extended Depth-of-Field”, Proceedings of SPIE - The International Society for Optical Engineering, 8291:4-, February 2012.

[4] Gordon Wetzstein, Douglas Lanman, Matthew Hirsch, Ramesh Raskar, “Tensor Displays: compressive light field synthesis using multilayer displays with directional backlighting”, TOG, vol. 31, no. 4, 2012.

[5] Ren Ng, Marc Levoy, Mathieu Bredif, Gene Duval, Mark Horowitz, Pat Hanrahan, “Light Field Photography with a Handheld Plenoptic Camera”, Stanford University Computer Science Tech Report CSTR, vol. 2, no. 11, 2005.

[6] Todor Georgiev, Andrew Lumsdaine, “Focused Plenoptic Camera and Rendering”, Journal of Electronic Imaging, vol. 19, no. 2, 2010.

[7] http://www.kowa-optical.co.jp/fa/e/

[8] Mehrdad Teratani, Shu Fujita, Wenzhe Ouyang, Keita Takahashi, Toshiaki Fujii, “3D Imaging System Using Multi-Focus Plenoptic Camera and Tensor Display”, Proc. International Conference on 3D Immersion (IC3D2018), Brussels, Belgium (2018.12).

Acknowledgment

This work is partially supported by Grant-in-Aid for Scientific Research (C), registered number 16K06349.